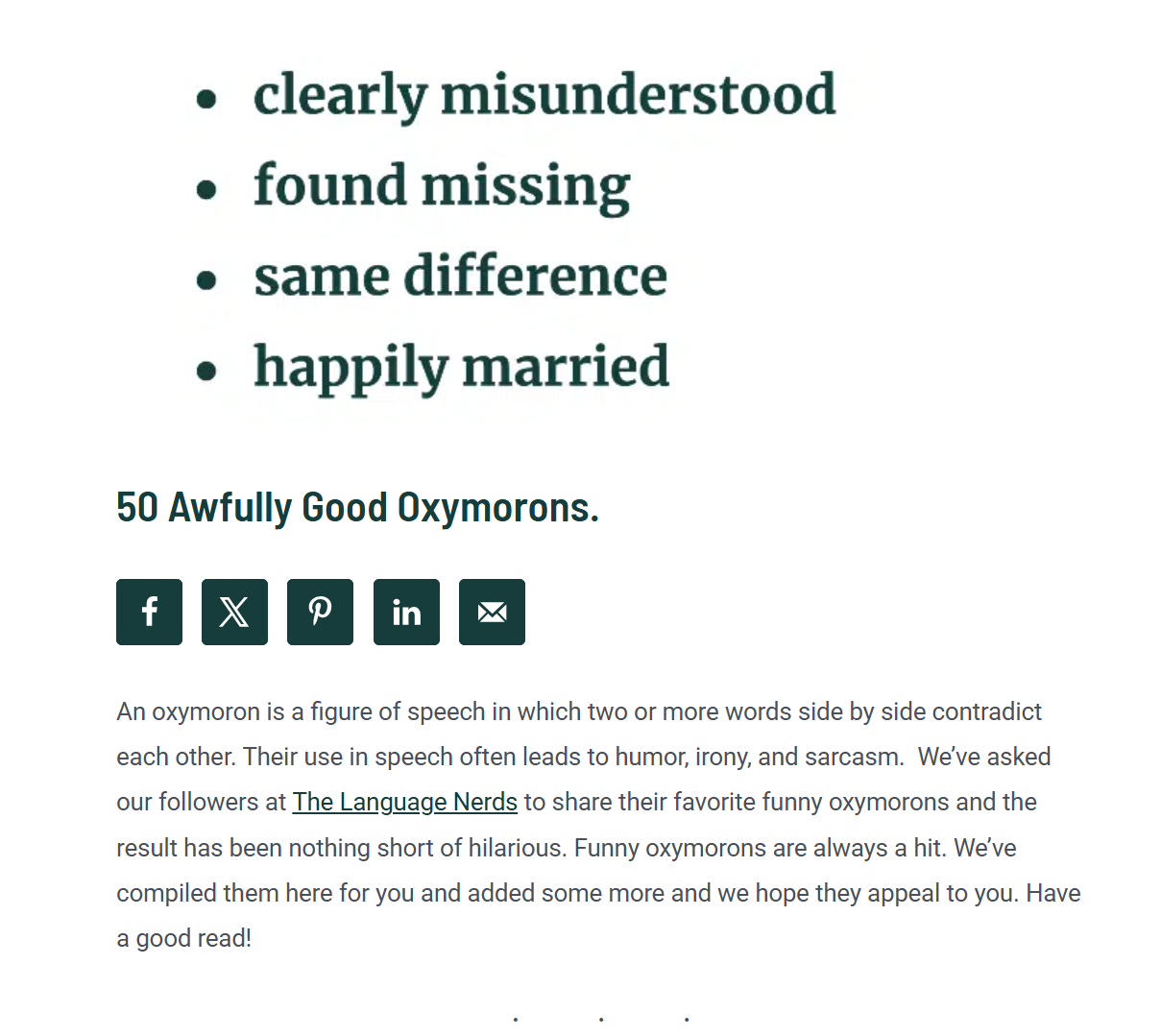

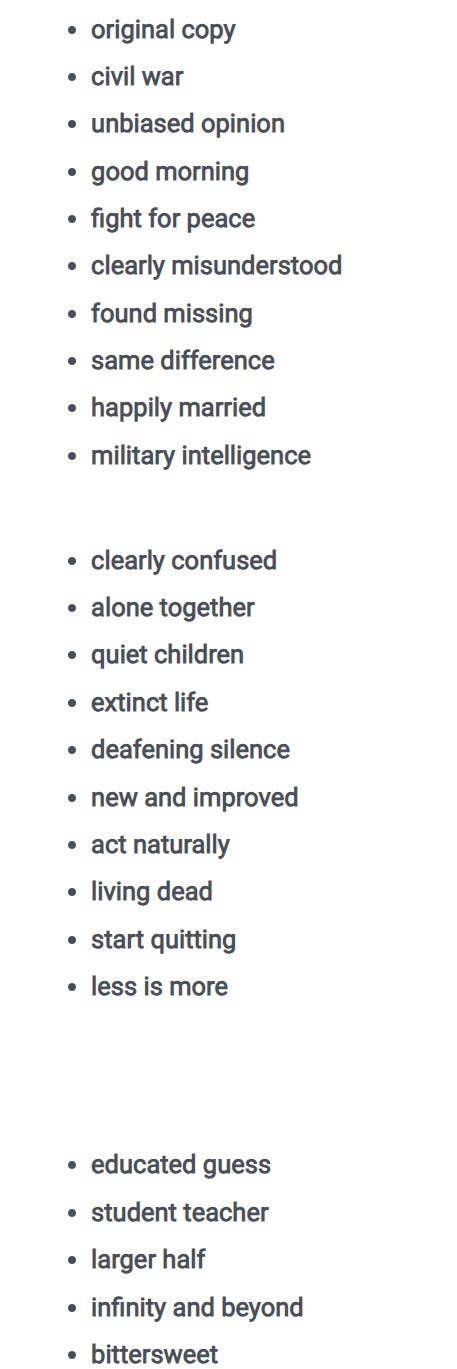

Oxymorons

The Big Takeaway From Google’s AI Climate Report

For the climate-concerned, the rise of the AI-reliant internet query is a cause for alarm. Many people have turned to ChatGPT and other services for simple questions, and even basic Google searches now include an AI-generated result.

Depending on how you crunch the numbers, you can come up with a wide range of estimates for the energy use and related climate cost of an AI query. OpenAI, which makes ChatGPT, puts the figure at up to 0.34 watt-hours per prompt, equivalent to running a household lightbulb for 20 seconds, while one set of researchers concluded that some models may use as much as 100 times more for longer prompts.

On Thursday, Google released its own data: the median text prompt to Gemini, the company’s ubiquitous AI tool, uses 0.24 watt-hours. That’s equivalent to watching about nine seconds of TV. Such a prompt emits 0.03 grams of carbon dioxide equivalent. Perhaps more interesting is Google’s claim that Gemini text queries have become cleaner over time. Over the last year, energy consumption per query has decreased around 97% and carbon emissions per query by 98%, the company said. A separate Google report released earlier in the summer showed the company’s data center emissions decoupling from its energy consumption. (It’s worth noting that simple text queries are less intensive than other functions such as image, audio, or video generation, and that these figures exclude model training, which Google left out of its report because it is difficult to calculate accurately.)
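The energy comparisons in the two paragraphs above can be checked with a little arithmetic. The Python sketch below back-calculates the power draw each comparison implies (a lightbulb for the 0.34 Wh figure, a TV for the 0.24 Wh figure), the grid carbon intensity implied by 0.24 Wh and 0.03 g of CO2e, and the "times smaller" factors behind the 97% and 98% reductions. The inputs are the figures quoted in the text; the function names are illustrative, and the outputs are rough checks, not numbers from Google's or OpenAI's reports.

```python
# Back-of-envelope checks on the per-prompt figures quoted in the text.
# Inputs come from the article; derived values are rough checks only.

SECONDS_PER_HOUR = 3600


def implied_power_watts(energy_wh: float, seconds: float) -> float:
    """Average power (W) needed to use `energy_wh` watt-hours in `seconds` seconds."""
    return energy_wh / (seconds / SECONDS_PER_HOUR)


def implied_intensity_g_per_kwh(grams_co2e: float, energy_wh: float) -> float:
    """Carbon intensity (gCO2e per kWh) implied by an emissions/energy pair."""
    return grams_co2e / (energy_wh / 1000)


def reduction_factor(percent_decrease: float) -> float:
    """Turn a percentage decrease into an 'N times smaller' factor."""
    return 1 / (1 - percent_decrease / 100)


# 0.34 Wh over 20 s (lightbulb comparison) and 0.24 Wh over 9 s (TV comparison).
print(f"Lightbulb comparison implies about {implied_power_watts(0.34, 20):.0f} W")
print(f"TV comparison implies about {implied_power_watts(0.24, 9):.0f} W")

# 0.03 g CO2e per 0.24 Wh prompt.
print(f"Implied carbon intensity: {implied_intensity_g_per_kwh(0.03, 0.24):.0f} gCO2e/kWh")

# Year-over-year per-query reductions reported in the text.
print(f"97% less energy = about {reduction_factor(97):.0f}x smaller")
print(f"98% lower emissions = about {reduction_factor(98):.0f}x smaller")
```

The implied draws, roughly 61 W and 96 W, are plausible values for an incandescent bulb and a television, so the comparisons hold up. Note how sensitive the reduction factor is near 100%: because the reported percentages are rounded, a "98%" cut could mean anything from about 40x (97.5%) to about 67x (98.5%).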

Whether such a downward trajectory can continue is a crucial question for anyone watching the future of energy and climate in the U.S.—with implications not just for the future of U.S. emissions but also for the hundreds of billions of dollars in power sector investments. Across a variety of related industries, leaders will need to try to thread the needle: addressing the growing demand for AI while avoiding overbuilding infrastructure as AI models grow more efficient.

Google’s progress boils down to two levers: cleaner power and more efficient chips and query crunching.

The clean energy strategy is impressive but fairly straightforward. The company buys a lot of renewable energy to power its operations, signing contracts for 8 GW of clean power last year alone. That’s equivalent to the capacity of 2,400 utility-scale wind turbines, according to Department of Energy numbers. Looking further ahead, the company has also invested in efforts to bring emerging clean technologies such as nuclear fusion online.
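The 8 GW comparison can be unpacked the same way. Dividing the contracted capacity by 2,400 gives the turbine rating the comparison implies. Capacity is peak output, though, so the sketch also estimates annual energy using a capacity factor of 35%; that value is a hypothetical assumption chosen for illustration, not a figure from Google or the Department of Energy, and real contracts mix wind, solar, and other sources with different capacity factors.

```python
# Unpacking the 8 GW comparison from the text.
# CAPACITY_FACTOR is a hypothetical assumption, not a figure from Google or the DOE.

HOURS_PER_YEAR = 8760

contracted_gw = 8.0    # clean power contracted last year (from the text)
turbine_count = 2400   # DOE-based equivalence quoted in the text

# Nameplate rating per turbine implied by the comparison, in megawatts.
mw_per_turbine = contracted_gw * 1000 / turbine_count

# Nameplate capacity is peak output; actual generation over a year is lower.
# Assume a hypothetical 35% capacity factor to estimate annual energy in TWh.
CAPACITY_FACTOR = 0.35
annual_twh = contracted_gw * HOURS_PER_YEAR * CAPACITY_FACTOR / 1000

print(f"Implied turbine rating: {mw_per_turbine:.2f} MW")
print(f"Annual energy at {CAPACITY_FACTOR:.0%} capacity factor: {annual_twh:.1f} TWh")
```

About 3.3 MW per turbine is in the range of modern utility-scale onshore turbines. Changing `CAPACITY_FACTOR` shows how strongly the energy estimate depends on that assumption.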

Then there are the company’s efficiency measures. In energy circles, efficiency usually means using less energy and making energy hardware run more productively: think of more efficient climate control or better insulation. While Google has done some of that, its most impressive efficiency gains have come from the AI ecosystem rather than the energy system. The company designs its own chips, which it calls TPUs, as an alternative to the widely used GPUs. Those chips have become some 30 times more efficient since 2018, according to Google’s sustainability report. The company has also improved the efficiency of its models using techniques that process queries differently, reducing the computing power needed. And a few weeks ago, the company announced a program to shift data center demand to times when the electricity grid is less stressed.

The question, not just for Google but for any company deeply invested in AI, is whether those programs and the resulting efficiency gains can continue. Further efficiency gains would be a huge climate win, as long as growth in usage doesn’t outpace gains in efficiency.
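The condition in that last sentence is simple arithmetic: total energy equals the number of queries times the energy per query, so total use falls only if query volume grows by a smaller factor than per-query efficiency improves. The sketch below uses the roughly 33x per-query improvement implied by a 97% drop; the usage-growth scenarios are hypothetical, not forecasts.

```python
# Total energy = number of queries x energy per query.
# The usage-growth multipliers below are hypothetical scenarios, not forecasts.


def total_energy_change(usage_growth: float, efficiency_gain: float) -> float:
    """Ratio of new total energy to old total energy.

    usage_growth: how many times more queries are served (e.g. 10.0 means 10x).
    efficiency_gain: how many times less energy each query uses (e.g. 33.0 means 33x).
    """
    return usage_growth / efficiency_gain


# About 33x, from the roughly 97% per-query energy drop reported in the text.
efficiency_gain = 1 / (1 - 0.97)

for usage_growth in (10, 33, 100):  # hypothetical growth in query volume
    ratio = total_energy_change(usage_growth, efficiency_gain)
    trend = "falls" if ratio < 1 else "rises"
    print(f"{usage_growth:>3}x more queries -> total energy {trend} to {ratio:.2f}x of before")
```

At around 33 times more queries the two effects roughly cancel, which is why the pace of adoption matters as much as the pace of efficiency gains.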

Greater efficiency would also have significant implications across the energy sector. Right now, power companies are betting big on new sources of electricity generation on the assumption that AI will keep driving demand growth. But it’s very hard to predict exactly how fast demand will grow, and prospective efficiency gains are a big reason why. Google’s results should at least give pause to anyone making those bets: how much more efficient AI can become is a known unknown.


Science says you need a human transcriptionist!

How the Brain Builds Conversations Across Time

Related brain processes—speaking and listening—use distinct systems.

Posted July 14, 2025 | Reviewed by Devon Frye

Key points

- The brain builds conversational meaning across multiple timescales, from short phrases to full narratives.
- Brief segments rely on shared brain regions, while longer stretches engage different systems for speaking and listening.
- These findings help explain how people keep track of conversations and shift fluidly between roles.

“Happy talk,

Keep talkin’ happy talk,

Talk about things you’d like to do.”

These lyrics from South Pacific hint at something deeply human: Our lives unfold through talk.

Our conversations give form to our thoughts and tie us to one another. But beneath the surface of every spoken exchange lies a complex neural process, one that shapes how we create and interpret meaning together.

A new study published in Nature Human Behaviour reveals that the brain organizes this exchange by adapting to the timescale of the conversation. At shorter intervals, the brain uses overlapping systems for both speaking and listening. But as the dialogue stretches into full thoughts or stories, speaking and listening begin to rely on distinct processes. This layered structure helps explain how people carry out fluid, responsive conversations.

How the Brain Follows Conversations

To explore the inner mechanics of dialogue, researchers in Japan invited pairs of individuals to hold unscripted conversations while lying in separate brain scanners, talking to each other through headphones and microphones. Their goal was not to study isolated words or scripted exchanges but to capture the fluid, spontaneous rhythms of everyday communication.

The researchers segmented each conversation into windows of varying length, from fleeting phrases to full narrative arcs, and examined how the brain responded at each timescale. Over short windows, the same neural systems were active whether a person was speaking or listening: both parties seemed to rely on a shared set of circuits to manage the rapid flow of words. At longer timescales, however, the brain began to treat the two roles differently.

Listening, in particular, was more demanding. As stories unfolded into complex ideas, listeners recruited a broader set of brain regions involved in memory retrieval, sustained attention, and social cognition. These included areas like the angular gyrus and posterior cingulate cortex, which help link incoming language to stored knowledge, and the medial prefrontal cortex, which supports imagining other people’s thoughts and intentions.

These networks allowed the listener not only to absorb the speaker’s words but to track their meaning over time, integrate it with prior knowledge, and infer intention. Speaking did not require the same level of integration. It remained more localized, focused on generating language and responding to immediate context. This involved regions like Broca’s area in the left frontal lobe, which helps plan speech, and nearby motor areas responsible for controlling the muscles used in speaking.

In this asymmetry lies a profound insight. To speak is to project thought outward, but to listen is to reconstruct another person’s inner world. It is no surprise, then, that the brain allocates its deepest resources to the act of listening.

Why Speaking and Listening Feel So Different

To uncover how this works, the researchers constructed computational models capable of predicting whether a person was speaking or listening based solely on their brain activity.

Even the smallest acknowledgments, like “right,” “uh-huh,” and “you know,” elicit stable patterns in the brain. These fragments serve a subtle but vital purpose. They signal presence, mark engagement, and keep the rhythm of dialogue intact. In doing so, they reflect the fundamentally social nature of language: We do not speak into a void, but to be heard, understood, and affirmed.

As conversations become emotionally charged or intellectually complex, the gap between speaker and listener widens. The listener, more than the speaker, must navigate shifting layers of meaning. This demands not only cognitive effort but also emotional attunement.

Brain areas like the anterior insula and amygdala become more active during emotionally rich moments, helping the listener register tone and affect. Other regions, such as the temporoparietal junction, help track the speaker’s perspective, allowing the listener to imagine what the speaker might be feeling or intending. To listen well is to hold another person’s experience in mind, to mirror their emotions without losing oneself.

A Brain Designed for Dialogue

Conversation is more than the exchange of words. It is a layered, time-dependent process involving memory, emotion, attention, and the ability to switch between speaker and listener. The brain makes this possible by drawing on flexible systems: some geared for rapid responses, others tuned for extended stretches of meaning.

What emerges is a brain finely shaped for connection. As South Pacific reminds us, “Happy talk, keep talkin’ happy talk.” The complex choreography within the brain allows us not only to speak, but to understand and be understood.

References

Yamashita, M., Kubo, R., & Nishimoto, S. (2025). Conversational content is organized across multiple timescales in the brain. Nature Human Behaviour, 1-13.

About the Author

William A. Haseltine, Ph.D., is known for his pioneering work on cancer, HIV/AIDS, and genomics. He is Chair and President of the global health think tank Access Health International. His recent books include My Lifelong Fight Against Disease.
