This Is What Standing Up to Dictators Looks Like

In authoritarian regimes, silence is safety—and speech is rebellion. In this powerful episode of Autocracy in America, dissidents Garry Kasparov and Masih Alinejad reflect on the personal cost of speaking out, and the urgent need to keep telling the truth, even in exile.

What is a dissident? In an autocracy, standing against the rulers could mean harassment, prison, torture, even death. Dissidents stand up anyway.

Host Garry Kasparov is joined by Masih Alinejad, whose work for women’s rights against the Islamic Republic of Iran has led to her exile in the United States. It has not ended her fight, nor has distance made her safe—she was targeted for assassination at her Brooklyn home. Masih and Garry discuss their joint work in fighting autocrats worldwide, and the importance of safeguarding the values of democracy before it’s too late.

The following is a transcript of the episode:

Garry Kasparov: What is a dissident? In an autocracy, the line is brightly drawn. The ruling authority is unjust. The people have no legitimate voice in their destiny or that of the nation. Standing against the rulers could mean harassment, prison, torture, even death. Dissidents stand up anyway.

If that is too long a definition, here’s another one: A dissident is Masih Alinejad. She’s my friend and the guest in today’s episode. Her brave stand for women’s rights against the Islamic Republic of Iran has led to her exile in the United States, but it has not ended her fight, nor has distance made her safe. She was targeted for assassination at her Brooklyn home. But the would-be killers were captured and recently convicted in a New York City court. Her story teaches us to value what we have and to never take our rights—or our safety—for granted.

From The Atlantic, this is Autocracy in America. I’m Garry Kasparov.

Since the Cold War ended—and with it, the categorical good and evil contrasts it contained—many people lucky enough to have been born in a free country, especially America, have begun to forget how lucky they are.

Of course, many Americans have started thinking about their freedoms a lot these days, but not for the reasons I would’ve hoped. They’re seeing with their own eyes some of the early warning signs that dissidents in unfree countries know too well. I’ve always believed that if you stop caring about freedom everywhere, you won’t have it at home for long. The moral relativism of the post–Cold War era has come home to roost.

So it’s time to organize and time to fight, and there’s no one who can inspire and teach us how to do those things better than Masih Alinejad.

Hello, Masih. So good to see you.

Masih Alinejad: Always good to see you, Garry. You are my brother-in-arms.

Kasparov: You are my sister-in-arms. So where do we start? There’s so much I want to talk to you about. Okay. My late friend and ally Boris Nemtsov—former deputy prime minister of Russia, at one point considered to be [Boris] Yeltsin’s successor, when we worked in the opposition trying to stop [Vladimir] Putin’s dictatorship—he used to say that in the absence of democratic procedures, when you live in the authoritarian regime, the only way to measure the effectiveness of your work is how the regime responds to it. And judging by the response of the Iranian dictatorship, religious dictatorship to you—assassination attempts, kidnapping attempt—it seems you are No. 1 on their hit list, enemy No. 1 of Iranian mullahs. So how come the regime that every day, every hour demonstrates contempt for women is so afraid of you?

Alinejad: To be honest, it’s a badge of honor. Garry, I was on the phone with you when actually the guy with the AK-47 came in front of my house in Brooklyn. We were on a Zoom meeting with our friend Leopoldo López, and it was a very tense meeting, if you remember. So I didn’t open the door. So basically, you saved my life. I could have been dead. The regime, whatever I do, it made them mad and very angry with me, and they hate me so much that they really wanna get rid of me.

Sometimes I say to myself: Is it worse? Like, first kidnapping plot, and then the guy got arrested with AK-47. I thought, It’s done. That’s it. And then two more men, a few days after a presidential election here in the United States of America, got arrested. So, but, but think about it.

Kasparov: I’m just, I’m thinking about it. But you know, it’s just, our listeners should understand, so. Why so serious? Why you? Why these dictators are so scared of dissidents that have nothing but Instagram, Twitter, X, and just the power of words?

Alinejad: That’s a very good question, but I think we should not downplay the power of women in Iran. Yes, of course. There are three pillars that the Islamic Republic is based on: death to America, death to Israel. And the third pillar is women. So I strongly believe the reason that they really hate me and they want to kill me, it’s because I know how to mobilize women. So I remember the day when I started my campaign against compulsory veiling, I was myself shocked how I got bombarded by women: young women inside Iran sending me videos of themselves walking unveiled, which is a punishable crime. Garry, it’s like if you walk unveiled, you get fined, you get lashes, you get killed. But women were practicing their civil disobedience. So it was not just about a small piece of cloth. When women can say no to those who control their body, these women can say no to dictators. And that scares the regime, because right after the Islamic Revolution, the Islamic Republic actually forced the whole, you know, half of the population to cover themselves. Why? Because compulsory veiling is the main symbol of a religious dictatorship. It’s their, you know—we women are forced to carry their ideology. If we say No, no longer are we gonna carry your ideology, of course they hate us.

Kasparov: Okay. Let’s go a bit deeper in history. So let’s start with you in Iran. You had been working in Iran, and you were critical of the regime.

Alinejad: I was a parliamentary journalist.

Kasparov: You are the parliamentary journalist.

Alinejad: I got kicked out from the Iranian parliament just because of exposing their payslips.

Kasparov: Oh, okay. Fine. So, when did you leave Iran?

Alinejad: In 2009. The presidential election happened, controversial presidential election. They stole the—

Kasparov: You call it controversial.

Alinejad: It was—actually, I call it selection, Garry. We don’t have elections in any authoritarian regimes at all.

Kasparov: I do know that.

Alinejad: It was a selection, but at the same time I had hope. I have to confess that. I had hope that this regime can be reformed. So millions of Iranians, they had hope that we can reform the regime. So we try—

Kasparov: By voting?

Alinejad: By voting.

Kasparov: By voting for so-called reformers?

Alinejad: So-called reformers. We tried that; we tried that many times. It didn’t work, and that’s why, I mean, me and many people who believed in reform, Green Movement, they left Iran.

Kasparov: So there was the election.

Alinejad: They stole the election.

Kasparov: It seemed that the reformer won. And then it was, they call it the Green Revolution, but the world ignored it. President [Barack] Obama turned a blind eye on it.

Alinejad: Not only that, President Obama found an opportunity that, Wow, the regime is weak. So then he could get a deal from the ayatollahs, and guess what? I’d never forget the time when people were chanting Obama, Obama. You either with us or with them. You know why, Garry? Because Obama in Persian means “he is with us.” Oo means “he”; ba means “with”; ma means “us.”

Kasparov: Wow. I know. So you left Iran, because I always remember when I left Russia, so, and decided not to come back because I was already part of this ongoing criminal investigation about political activities. What happened with you, 2009? Any specific, you know, reason? Of course you were treated with at least suspicion by the mullahs and by their henchmen. But anything else happened in 2009 so that you sensed it’s time to leave?

Alinejad: In 2009, Garry, I didn’t, I didn’t make the decision to leave my country. I came here because I was invited by Obama’s administration to do an interview with President Obama. When I came here, the Green Movement happened, and the administration got cold feet. Because they told me if they give the interview to me—I was working for the reformist newspaper, which was, which belonged to one of the presidential challengers—so they thought that because we are supporting the Green Movement, if they give me the interview, the U.S. will send the signal to the regime in Iran that the United States of America is supporting the Green Movement. You tell me: What is wrong if a democratic country supports a pro-democracy movement?

Kasparov: It’s amazing. It’s such an easy way to send a subtle signal without a direct offer of support to the movement by just giving an interview. And they just turn you down?

Alinejad: Basically, Obama ruined my life, because I was here, I couldn’t get the interview, and I didn’t know what to do.

Kasparov: And what did come next?

Alinejad: Nothing. I couldn’t go back, because the Iranian regime shut down the newspaper that I worked for; they arrested thousands of innocent protestors. They killed more than 100 innocent people in the Green Movement. And I was telling President Obama: If I get the interview, I’m gonna go back. Because they’re not gonna touch me, because the U.S. government is going to actually put pressure on them. But I didn’t get the interview. And, I mean, my heart was broken. Because I think there was nothing wrong by sending a signal to the regime by giving an interview to a pro-democracy journalist and saying that, “Yes, we proudly support the Green Movement. We proudly support the innocent people of Iran.”

After, uh, I think eight years, I saw—six years?—I saw Hillary Clinton in a party, and I kind of grilled her. I said, I’m here because Obama’s administration never accepted to give me the interview. Now I lost my country. I am stuck here in America. And I said that basically, I don’t want you to help us or to save Iranians. I want you to at least stop saving the Islamic Republic. That was my point. And what happened? Hillary Clinton, I have to give her the credit. She actually went public. After that, she said that big regret, the Obama administration should have supported the [movement]. Obama, recently, after, you know, the 2022 uprising—woman, life, freedom—President Obama himself said big regret. But at what cost? A lot of people got killed. After 10 years, President Obama said Yes, we should have supported the Green Movement. It is, it is beyond sad that leaders of the free world do not understand that they have to stick with their principle. Instead of just empty condemnations or empty words of solidarity or supporting, they have to put principle into actions.

Kasparov: I’m a bit hesitant asking you this very tough question, because, you know, it’s, also yes, very close to my heart. When I left, I was, I just decided not to come back to Russia to face imminent arrest. So I didn’t think that it would be for such a long period, and maybe again, it’s now, it’s indefinite. I’m not sure I ever would be able to come back. I still hope that, you know, I’m young enough, you know, just to see the change in Russia. About you. It’s not, it’s not 12 years; it’s 16 years. So when did you leave, or when you decided not to come back, when you realized that, you know, this return to Iran would be—

Alinejad: A dream.

Kasparov: —just an instant arrest or worse? So what did you feel?

Alinejad: Sometimes I really feel miserable, Garry. I have to—you, you are my brother, and I have to admit that. I’m an emotional person.

Kasparov: I know that. You are in good company.

Alinejad: Yeah, and sometimes I think that just because having a different opinion—wanting democracy, dignity, freedom—I have to pay such huge price of not hugging my mother.

Kasparov: You still have family there?

Alinejad: Yeah. My mom lives in a small village. She doesn’t even know how to use social media. So, when my brothers, or you know, my family, when they go there to visit her, this is just an opportunity I can talk to her. But guess what? Now, talking to me is a crime. The Iranian regime created a law under my name. If anyone sends videos to Masih Alinejad, or talks to Masih Alinejad, will be charged up to 10 years in prison. So they implicated my mother for the crime of sharing her love with me.

And now my mom cannot talk to me. And now my brother—like, my family, should be careful. If they talk to me, they have to pay a huge price. You see, I have family. But it’s like I don’t have them. Why? Because I want freedom, because I want democracy, and that’s my crime. Sometimes I think that I won’t be even able to hug my mother. I forget their faces, I wanna hug them. I wanna touch my mom’s face, my father’s face. And guess what, Garry? Because of all these traumas, because of all these, it’s not easy to handle them. So I planted trees in my Brooklyn garden to honor my mother, to honor my father. So I named a tree, cherry blossom tree, after my mom’s name in my Brooklyn garden.

And now I’m not even able to see those cherry blossom trees, because I had to move. I mean, in three years, the FBI moved me more than 21 times. Dictators first forced me to leave my mom, and now being away from my cherry-blossom mother. It was a beautiful tree. My father, so, because he, you know, he disagreed with my ideas, I planted a peach tree, and I put it in the backyard garden. I don’t wanna see you, but be there, because I love you.

Kasparov: You just said that your father disagreed with you. So you have your family not on one side. It’s split.

Alinejad: Yeah. It’s like Iran. You know, on the map we have one country: Islamic Republic of Iran. But in reality, we really have two Irans. It’s like we are banned from going to stadium. Women are banned from dancing. Women are banned from singing, Garry. From singing. So women and men are banned from having a mixed party. So we are banned from a lot of things by the ayatollahs. Yeah. So, but Iranians are brave enough to practice their civil disobedience, to create their own Iran. So I try to give voice to the real Iran, trying to show the rest of the world that this is a barbaric regime.

When you go to my social media, you see the true face of Iranian women, brave people of Iran. You see the face of mothers whose children got killed, but they bravely shared their stories. I never forget the day when the head of the Revolutionary Court created a law saying that anyone sent videos to Masih would be charged up to 10 years in prison.

So I shared this video, because I wanted to let my people know about the risk. Guess what, Garry? I was bombarded by videos. This time, from mothers whose children got killed by the regime walking on the same street that their children got killed. Holding their picture and saying, Hi, Masih. This is the picture of my son, and I am in the street where my son got killed. I rather go to prison, but not be quiet. Be my voice. This is the Iran that I’m proud of. So these women are like women of suffrage, like, you know—like women, like Rosa Parks of my country. So that’s why I use my social media. To echo their voices, to continue my fight against the Islamic Republic. As I told you, they kicked me out from Iran, but they couldn’t kick me out—like my, my mind, my heart, my soul, my thoughts are there. And I’m still fighting with them.

Kasparov: We’ll be right back.

[Break]

Kasparov: You mentioned Rosa Parks. One of the heroes of human-rights movements. All Americans who wanted to fight for equal rights for their compatriots, no matter the religious, racial, or ethnic differences. But that’s, I think where, you know, we can lose our audience here. And Americans, because they always try to see that it’s through the same lens. Yes, yes. It’s heroic. Yes. It’s difficult. And look what we did. We should explain to them that it’s not the same, because all levels of power that are on the other side. We have no—no courts can actually save us in Russia or in Iran, or in Venezuela. So facing the obstacles in our part of the world is very different from, of course, facing the obstacles in the free world, whether it’s 60 years ago or now, but you know, this kind of hypocrisy, you know, I think it’s just, it’s—yeah. Yeah.

Alinejad: It breaks my, yeah.

Kasparov: I look, yes, I look at, at the smile on your face. Yes, of course, you know that. But I think it’s very important for people to understand, while, you know, we all can appreciate the activities of Me Too—yes, there are many things that, words you can, you can, right the wrongs. But this is not the same as women’s situation in Iran, or even worse in Afghanistan. So let’s talk about it. Let’s talk about, you know—this is very different treatment of human rights in the United States or European democracies versus the rest of the world, where somehow we hear even from those who are fighting for, you know, publicly here for the values of equality—just the racial equality, gender equality, whatever. But somehow they become very shy talking about Iran, Afghanistan, or other dictatorships. And they even talk about some kind of, Oh, it’s, just their culture. Answer them.

Alinejad: You called it hypocrisy. Garry—

Kasparov: I’m trying to be diplomatic. I’m the host of the show.

Alinejad: I call it—absolutely betrayal. Not only to human rights and women’s rights, values. But also, it’s a betrayal to their own sisters in Afghanistan, in Iran. Let me just tell you why I call that the biggest enemy of the women in Iran and Afghanistan, unfortunately, are the Western feminists. And I’m telling you why. I’m telling you why.

When I was fighting against compulsory veiling, in America, when I launched my campaign against compulsory hijab, when I came to America, I saw the Women’s March taking place in America. I was so excited when people here were chanting My body, my choice. And I was marching with them. Oh, Garry, you have to see my video. I was, like, so excited, putting a headscarf on a stick and chanting My body, my choice. People were replying Her body, her choice. And I thought, This is the America. I called my friend in Iran, and I said, “This is the first time I’m demonstrating, I’m protesting—no one killing me, no one arresting me.” It was shocking for me that like, looking around, the police—

Kasparov: Police protecting you.

Alinejad: —protecting me to chant My body, my choice. I got arrested by morality police in my country. I was imprisoned by police in my country. I was beaten up by morality police in my country. When I was pregnant, I got arrested, and I was in prison. So when seeing the police in America, protecting me chanting My body, my choice, I was crying out of joy.

I reach out to the same Women’s March people. And I said to them, Now it’s time to support the women of Iran, to fight against the Islamic Republic, the ayatollahs. Iranian women say no to forced hijab. They all were like, Shhhh. I was being labeled that I cause Islamophobia. Why? Because they always say that, Um, that’s your culture. You know, cultural relativism became a tool: an excuse in their hand to support the ayatollahs to oppress women more. I’m saying that. Using all these narratives to actually send a signal to Islamic Republic that whatever you do, we don’t care. So what breaks my heart. When Boko Haram, actually—

Kasparov: Let’s clarify. Boko Haram—Islamist terrorist organization in Nigeria that had a very bloody record of persecuting Christians in the country. And of course, their first target is girls.

Alinejad: Exactly. What happened? Michelle Obama, and Oprah [Winfrey], Hillary Clinton, a lot of Western feminists, they supported a campaign: Bring Back Our Girls. Beautiful. Where are they? Where are the Western feminists? Why there is no Women’s March for women of Afghanistan? The situation of women in Afghanistan is exactly like The Handmaid’s Tale, which is a fiction. People in the West, buying popcorn, sitting on their sofa, watching The Handmaid’s Tale—fiction. Your fiction is our reality. It is happening right now. The apartheid against women is happening, but when this is in The Handmaid’s Tale, it’s bunch of like white women, so being denied their rights, being raped, forced to bring children, all wearing same dress code. This is the situation in Iran. This is the situation in Afghanistan.

So for me, when I don’t see women marching in university campuses here, college campus here. I’m like, This is hypocrisy. And when it comes to having policy against terrorism, one day Obama’s administration comes and goes, and then [Joe] Biden administration comes and goes. [Donald] Trump administration comes and goes. And they undo all the policy of the other president. They don’t understand that when it comes to terrorism, America should have only one policy. Believe me, the Islamic Republic—they don’t care whether Trump is in power or Biden is in power. They don’t care about left and right wing. They hate America. They hate American values, and that is what is missing. The American government does not understand that they don’t have one policy to end terrorism. That’s why, Garry, I think Americans should understand when it comes to end terrorism, it’s like: Islamic Republic is like a cancer. If you don’t end cancer, cancer will end you.

Kasparov: You don’t, you don’t negotiate with cancer. You cut it off. Yes.

Alinejad: You cut it off.

Kasparov: I agree. That’s what I’ve been saying about Putin. You enjoy the certain protection offered by American law. And those who tried to kill you and to kidnap you, they faced American law, and they have been convicted. America defended you. Yeah. And America forced them, you know, just to pay the price they deserved.

Alinejad: Mm-hmm.

Kasparov: So you were on the court, in the courtroom?

Alinejad: Oh yes.

Kasparov: You looked, you looked straight in the eyes.

Alinejad: I faced my would-be assassins. I looked into their eyes. I’m not saying that it was not scary, Garry. I was bombarded by different feelings, different emotional, looking into their eyes.

Kasparov: You were trembling.

Alinejad: I was like crying, back door, in the arms of the FBI agents who were protecting me. But immediately when I walk into the room, when I saw there was a female judge, I was like, This is what we are fighting in—I’m emotional—this is what we are fighting in Iran. Having a female judge in America, having the law enforcement sitting there, supporting me. I saw my friends, human-rights activists. I saw my neighbors, Garry, my neighbors from Brooklyn, and I was like, How lucky I am. This is what the Iranian people want to have. Justice. This is, this is like, this is the beauty of America. And I was like—felt the power. To look into their eyes and testify against the killers.

Kasparov: Now, having all these experiences, do you think that America is in any danger of sliding into the authoritarian direction? Do you think that Americans take this freedom for granted? Because you have plenty of experience, you know, both as an American citizen, as one of the leaders of the global dissident movement. Is America facing the real challenge of fundamental freedoms that Americans enjoyed over generations, for 250 years—they could be somehow in jeopardy?

Alinejad: Of course, democracy is fragile. I want Americans to understand that when you take freedom for granted, democracy for granted, when you take like, you know, everything for granted—think about it, that the authoritarian regimes are not gonna just stay there. They’re coming from different geography, different ideology, from communism to Islamism. But they have one thing in common: crushing democracy, hating America. And all the authoritarian regimes, Garry, you know better than me: They work together. They cooperate together. Why? Because they know how to support each other. They know how to back each other. But here in America, Republican and Democrats, when it comes to supporting the national security of America in the face of terrorism, they’re not together. So when they are not united, believe me, dictators will get united, and they will end democracy.

Kasparov: Now it’s time to talk about, you know, our joint efforts to create a global dissident organization. And now it’s the World Liberty Congress. And you are the president, the elected president, by the way.

Alinejad: As a woman, I cannot even choose my dress code in Iran, but I was elected!

Kasparov: Exactly. Let’s talk about it, about the concept, because we talked about human-rights abuses in Iran, Afghanistan. Briefly mentioned Russia and other places. So you talked very passionately about the dictators working together. Russia, China, Iran, North Korea. They worked together, not just in the United Nations.

Talk to our Ukrainian friends. And they tell you: They are working together, helping Putin to conduct this criminal, genocidal war in Ukraine. The free world is, I wouldn’t say disunited, but definitely is not united as it had to be. So we try to bring together dissidents who saw it just with their own eyes, who suffered from these power abuses. Whether it’s in Africa, it’s Middle East, in Latin America, it’s in Asia, it’s Eastern Europe, Russia, Belarus, central Asia. Unfortunately, there are too many countries that just now are living now under some kind of authoritarian or totalitarian rule. So we created this organization, and we want to have a powerful message of these combined forces of people who otherwise, you know, had little in common. But recognizing that it’s time for us to have a dissident international—to do what?

Alinejad: I’m sure you’re not gonna like that, but the only thing that we should learn from dictators is unity. Because you said that: They are united. So our organization is trying to actually teach the leaders of democracies that they have to be as united as dictators. And work together when it comes to end authoritarianism—which is, as you said, increasing every year. And we had our first general assembly in Lithuania. These are the true dissidents, who survived assassination plots, leading movements within their own country in Africa, all over the authoritarian regimes. So we need to get together and bring the wall of dictatorship down. Otherwise, democracy is going to go in recession forever. So I wanna invite everyone to actually learn about the World Liberty Congress and our joint efforts—and understand that this is the time to support the dissidents who are warning the rest of the world that dictators are expanding their ideology everywhere. In democracies as well.

Kasparov: Yes. So, of course I have to mention Anne Applebaum, who started this concept, Autocracy in America. She talked about it in a very scientific way. So obviously you are, you are offering more emotional—actually firsthand experience.

Alinejad: Firsthand experience, not emotional, Garry. Let me tell you something. The guy who was trying to kill me was from Russia. A Russian mobster, yeah? And the kidnapping plot as the FBI, you know, foiled it—

Kasparov: Revealed it.

Alinejad: Yes, exactly. When you read the indictment, they say that they were trying to take me from Brooklyn to Venezuela. Why Venezuela?

Kasparov: It’s a part of the same network.

Alinejad: Exactly. Yes. So that actually shows you this network: from Russia, Iran, Venezuela, China, North Korea. They’re not only supporting each other—like sharing technology, surveillance within their own authoritarian regime to oppress and suppress uprising. They are also using this for transnational repression beyond their own borders, in democratic countries. In 40 years, more than 500 non-Iranians were the target of the Islamic Republic, either kidnapping or assassination plots. More than 500—beyond their own borders in Western countries. That should be an alarm for everyone.

Kasparov: But, we can hardly expect Western democracies, especially the United States now and Donald Trump’s leadership, to incorporate dissidents’ concerns, human-rights issues, into any negotiations. He spoke to Vladimir Putin just a number of times. I never heard them talking about human rights.

Alinejad: So if they don’t care about human rights, I think national security is important for them, no? National security is under threat. Serious threats. I am talking about real assassination plots taking place on U.S. soil. If anyone can come to America and target me, next can be anyone who is now listening to me.

Kasparov: Let’s summarize. In the era of globalization, democracy cannot survive somewhere without being protected elsewhere. So everything is interdependent. It’s all connected, correct? So, what is our message? The World Liberty Congress brought together hundreds and hundreds of dissidents, because we understand that the world now, it’s now on one of the most critical stages of the never-ending war between forces of freedom and tyranny. And this war, of course it has front lines, like in Ukraine, for instance. But it goes across the globe. And this war also has its invisible borders inside the United States, inside Europe: so inside democratic countries. And here, our experience, our understanding of the nature of this war, is invaluable. People should listen to us. And eliminating human rights—or accepting the equality of people from every region of the planet, for just that they’re entitled for the same rights as Americans or Canadians or Brits or French or Germans—is going to harm democratic institutions in these very countries. Your last word?

Alinejad: My last word.

Kasparov: Your last word today, of course. Because we will hear a lot from you.

Alinejad: Yes. Some people in America are allergic to regime change.

Kasparov: To the word of regime change.

Alinejad: To the expression of regime change. I’m only allergic to dictators, and that’s how it should be. Don’t give diplomatic titles to terrorists. Let’s call them who they are. Don’t give diplomatic titles to dictators. They are dictators. So that’s my message. Very simple. Hashtag diplomacy is not going to save the lives of women in Iran, in Afghanistan. The lives of those people living under authoritarian regimes in Africa, in Latin America, Asia, Eastern Europe.

No; we need actions. We need the real solidarity, and don’t abandon those who are protecting democracy, who are fighting for freedom, who are trying to guarantee global security across the globe. I love America. I love Iran. And I’ve been given a second life, by the law enforcement. Garry, this is very ironic—a girl who was forced to shout “Death to America.” The country that I wish death for, the United States of America, gave me a second life. And that’s why I love America, and I wanna dedicate my life to fight for America as well: to protect America from terrorists, from authoritarianism. And that’s why I am full of hope and energy.

[Music]

Kasparov: When Masih and I spoke, it was before the United States and Israel attacked Iran. So we followed up with Masih: to ask her what she made of the strikes, and what they might mean in the battle against the Iranian regime and the broader fight against autocracy. Here is what she had to say:

Alinejad: To be honest, I am in touch with many Iranians, and they are happy when it comes to see the end of their killers, the commanders, the Revolutionary Guard members. So that made Iranian people happy. But at the same time, ordinary people got killed. And that’s the people of Iran paying a huge price. And what breaks my heart more—that now people are being left alone with a wounded regime, which is trying to get revenge on its own people.

So yes, I kept hearing in the West, Let’s end the war. Anti-war activists took to the streets, and I was like, It is not that difficult for you to say that. And when now I see that all those anti-war activists, you know, they just finished their job. No more talking about another war being waged on Iranian innocent women. People facing executions right now. It is beyond sad.

[Music]

That’s all I can say. That we only see peace and security in the region, across the globe, if we really say no to Islamic Republic. If you ask Iranians, they have only one message to you: The real warmongers are the Islamic Republic officials inside the country. And that’s why when we say no to war, we really mean no to the Islamic Republic.

Kasparov: This episode of Autocracy in America was produced by Arlene Arevalo and Natalie Brennan. Our editor is Dave Shaw. Original music and mix by Rob Smierciak. Fact-checking by Ena Alvarado. Special thanks to Polina Kasparova and Mig Greengard. Claudine Ebeid is the executive producer of Atlantic audio. Andrea Valdez is our managing editor. Next time on Autocracy in America:

John Bolton: This virus of isolationism—which isn’t a coherent ideology itself; it’s a knee-jerk reaction to the external world—can go through a long period of being irrelevant and then suddenly reappear. And I attribute this, in part, to a failure in both political parties ever since the collapse of the Soviet Union.

Kasparov: I’m Garry Kasparov. See you back here next week.

[Music out]

Dive into Genealogy

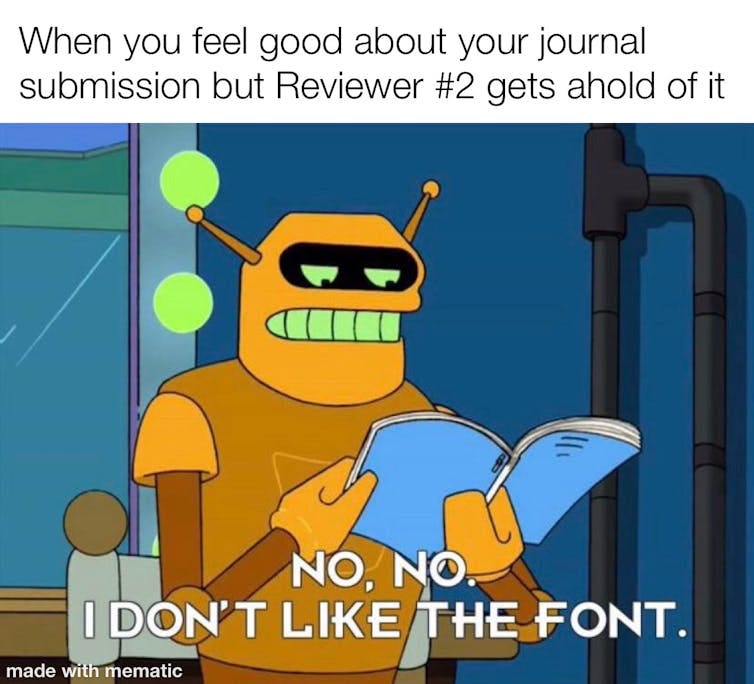

Ronald Reagan on Eureka College Swim Team Diving from Diving Board in Eureka, Illinois, 1928-32, National Archives Identifier 75857021

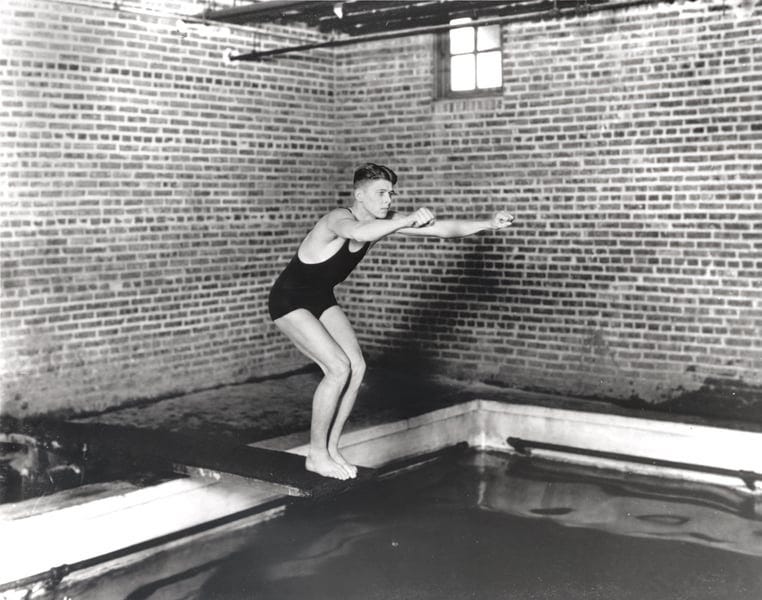

From the Territory of Montana to the Republic of Vietnam: Researching Native American Veterans in the National Archives, 1881–1966

Native Americans have a long and distinguished history of service in the United States Armed Forces. Using a host of records from across National Archives facilities, this talk will explore how National Archives records can be used to reconstruct their lives and service, using case studies from the Indian Wars, World War II, and the Vietnam Conflict.

Photograph of Navajo Code Talkers Serving with a Marine Signal Unit, National Archives Identifier 100378141

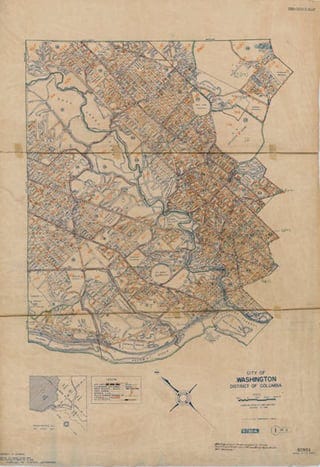

Mapping the 1950 Census: Census Enumeration District Maps at the National Archives

A census enumeration district was an area that could be covered by a single enumerator, or census taker, in one census period. Enumeration districts varied in size from several city blocks in densely populated urban areas to an entire county in sparsely populated rural areas. This presentation will focus on locating and using census enumeration district maps, with an emphasis on maps from the 1950 census.

1950 Census Enumeration District Maps – Washington, District of Columbia (DC) – Washington – Washington DC – ED 1-1 to 1295, National Archives Identifier 18655909


Passport Records: Passport Applications at NARA, 1790s–1925

Passports are documents that prove a person’s identity and citizenship and have been required for most foreign travel since 1941. This lecture will discuss the genealogical value of U.S. passport applications and related records, 1795–1925, that are held by the National Archives and Records Administration, and will focus on records that are available online.


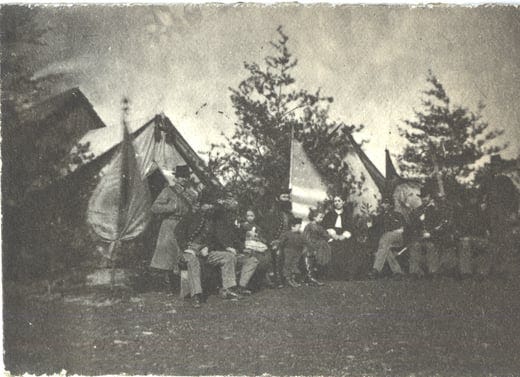

Civilians at War: Records of Participation in U.S. Military Conflicts

This presentation discusses ways in which civilians supported a war or were directly affected by it, with a focus on the American Revolution to World War I. These wars provided opportunities for employment by civilian or military agencies to provide goods, services, or loans. Other individuals sought reimbursement after suffering property loss. We’ll show examples of online records that document these relationships with the federal government (ca. 1776–1918) and Confederate States government (1861–1865).

Camp Scene, Group of Officers and Ladies, National Archives Identifier 167247176


Alien Files (A-Files): Researching Immigrant Ancestors at the National Archives

Learn about the Alien Files (A-Files), a rich source of biographical information for family research. The A-Files contain United States immigrant documents generated and collected since the mid-20th century with a wealth of data, including visas, photographs, applications, correspondence, and more. Participants in this session will understand who should have an A-File, discover online search methods to determine whether records are available at the National Archives, and gain the skills to successfully place a request.


Basic Military Records at the National Archives: Revolutionary War to 1917

This presentation outlines basic military records held at the National Archives Building in Washington, DC. The records cover the “Old Military” period from the Revolutionary War to 1917 and are characterized by different types of service, including volunteer service (state regiments and militias) as well as the Regular military (Army, Navy, and Marine Corps). Each type of service was documented differently, but there are also basic records common to all types of service.


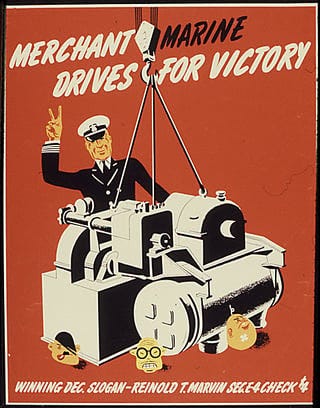

Merchant Marine Records at the National Archives at St. Louis

The National Archives and Records Administration (NARA) recently accessioned the core collection of Merchant Marine Licensing Files, which are now open to the public for the first time at the National Archives at St. Louis. Theresa Fitzgerald will discuss these holdings as well as our auxiliary collections of Merchant Marine records that are complex and closely connected.

Merchant marine drives for victory, National Archives Identifier 535188


It is important to preserve your own family records and share those stories. In these Genealogy Series presentations, National Archives experts give advice on how to preserve and protect family collections and how to respond to emergencies.

Planning, Techniques, and Strategies for Preserving Family Collections and Stories

Learn how professionals preserve records: they use surveys to create a plan, apply archival techniques, and select storage strategies. Surveys help you create a plan of action to determine record treatment, housing, and storage. Archival techniques can be used on your own personal collections of paper-based materials, photographs, and objects. Strategies include how to identify storage needs for papers and photographs. We will tie this all together and show you how significant documents and records help to tell family stories.


Disaster Preparedness and Response for Family Collections

Fires, floods, hurricanes, tornadoes, and earthquakes are scary scenarios for those who treasure and maintain their family history. Learn what you can do ahead of time to plan for emergencies and minimize risk to your family heirlooms as well as what to expect to do after a disaster to salvage damaged items.

Preserving and Digitizing Personal Photo Albums and Scrapbooks

Preserving photo albums and scrapbooks can be especially challenging, often because they are bound and contain a variety of problematic materials. This session addresses how to work with challenging materials commonly found in personal scrapbooks and albums, how to maintain the integrity of the arrangement, and how to store photo albums and scrapbooks appropriately. Pro tips for home users include ways to digitize albums, organize electronic files, and preserve them as electronic records. Examples come from both National Archives and personal collections.


Tips and Tools for Engaging Family with Your Research Finds

As the family historian, you have amassed information and records that will one day pass to the next family historian. How do you share your findings with others? Engaging younger family members with all your hard research may be another story. Education staff will demonstrate fun and engaging ways to connect research to your family, including younger family members. This lecture will highlight activities related to our most popular genealogy records, such as Immigrant Ship Arrivals, U.S. Census Records, Naturalization records, and Military and Pension files. The presenters will also demonstrate new ways to share your research finds online, using social media tools.

A Few Exceptions Worth Noting

Chicago Manual / October 11, 2022 / Updated August 12, 2025

Spotlight on Exceptions

Even the most straightforward rule will be subject to an exception sooner or later. That’s why CMOS qualifies so many of its rules with usually or generally. But some exceptions are so common that they deserve to be called rules themselves.

Let’s examine some of the more notable exceptions in terms of the rules they break.

Seven Rules, Eight Exceptions

The following seven rules—and their exceptions—can all be found in CMOS, either explicitly or by example (and sometimes both). To put Chicago’s rules in perspective, some additional exceptions recommended by other guides are also mentioned where relevant, in the explanations following the examples.

1. Do not add an apostrophe to form a plural.

Exception: Individual letters.

Example 1: There are two l’s and two a’s in the word llama.

Example 2: I got A’s in my science classes but B’s in everything else.

Most of us know that it’s two bananas, not two banana’s. But sometimes an apostrophe clarifies a plural that would otherwise be difficult to read.

In Chicago style, letters used as letters usually get italics, but italics alone are too subtle to differentiate a lowercase letter from its plural ending. Compare “two ls and two as” with the first example above; the apostrophes in the example (l’s and a’s) help to clarify that these aren’t the words Is and (especially) as.

Apostrophes can also be helpful with capital letters, where italics aren’t always used, as with letter grades or in the expression “the three R’s.” The meaning of “three Rs” is clear enough without an apostrophe, but what about “two As”?

The pluralizing apostrophe, which had been dropped as a requirement for capital letters in recent editions of CMOS, is once again Chicago style as of the eighteenth edition (see CMOS 7.15).*

Some style guides also specify apostrophes for the plurals of numbers (1920’s) and for abbreviations in all caps (YMCA’s). In Chicago style, that would be 1920s and YMCAs.†

For more on the apostrophe—which is more commonly used in contractions and possessives (when it’s not acting as a single quotation mark)—go to “Chicago Style Workout 65: Apostrophes” and take the quiz.

2. The words in a direct quotation should reflect the source exactly.

Exception: The capitalization of the first letter of quoted text can be adjusted to suit the syntax of the surrounding sentence.

Example: Regarding copyright, the US Constitution gives Congress the power “to promote the Progress of Science and useful Arts, by securing for limited Times to Authors and Inventors the exclusive Right to their respective Writings and Discoveries.”

In the Constitution itself (art. 1, sec. 8), that opening “to” begins with a capital T—but only because it’s the first word in the eighth of eighteen enumerated powers, each of which begins with the word “To.” Outside the context of the original, the capital T has little significance, and Chicago says that it can be adjusted as needed (see CMOS 12.7, rule 3). (The original capitalization of words like “Progress,” “Science,” and “Arts” is more than circumstantial and is therefore retained.)

Some styles say to bracket any such change (i.e., “[t]o promote . . .”; see CMOS 12.21). Those brackets may help readers find the quoted words in the original more quickly, but any advantage from this intervention (which might be required dozens of times in the typical literary or historical study) is too small to justify making it mandatory outside of certain legal and textual studies.

3. Do not begin a sentence or a heading with a lowercase letter.

Exception: Words like iPhone and eBay.

Example: iPhones can always be found on eBay, even if you’re looking for a newer model.

Though some styles say to apply an initial cap to words like iPhone at the beginning of a sentence or heading, such words already feature a capital letter; they don’t need any extra help from the Shift key. See CMOS 8.155.

4. Do not begin a sentence with a numeral.

Exception: Terms that include a mix of letters and digits.

Example: 7-Eleven is known to many as the home of the Slurpee.

Numerals at the beginning of a sentence can be hard to read, especially in a work that features old-style numbers, many of which look like lowercase letters. In the example below, notice how the number 150 is almost hiding at the beginning of the second sentence (whereas the word “Because” stands out as intended):

The initial capital in a term like “7-Eleven” or “3D” makes this less of a problem, as do the parentheses in a term like “401(k).” So for the eighteenth edition we added such terms as exceptions to the usual rule (see CMOS 9.5).

Four-digit years are also usually recognizable at the beginning of a sentence—especially when old-style numerals aren’t being used—so we now allow those also. But we still advise a workaround as the better option: The year 1937 . . .

5. For spelling, follow Merriam-Webster. If an entry lists two or more spellings, choose the first.

Exception: The Chicago Manual of Style spells copyeditor as one word.

The term was first recorded in the Manual as two words, in the index to the twelfth edition: “Copy editor. See Manuscript editor.” But it was spelled as one word in the thirteenth edition (published in 1982), and we’ve never looked back.

Unlike the verb copyedit, which is listed first in Merriam-Webster (ahead of the two-word form copy edit), the noun copyeditor is a “less common” variant (behind the first-listed two-word form copy editor). But we like how the one-word noun copyeditor is consistent with the first-listed verb form—and with the related nouns copyholder, copywriter, and copyreader. See also CMOS 7.1 and 7.2.

6. Abbreviations form the plural by adding s.

Exception 1: Abbreviations for units of measure, which are invariable in both the metric system and the older imperial system—as in 8 km or 3 in. (not 8 kms or 3 ins.).

Exception 2: Irregular plurals like pp. (pages, sing. p.) and MSS (manuscripts, sing. MS) and plurals of abbreviations that already end in s (e.g., trans., which can be used for one translator or more than one translator).

Plurals are always subject to irregularities; abbreviations are no exception. See CMOS 7.15, 10.59, and 10.73 for more details and examples.

7. Each new paragraph in a book gets a first-line indent.

Exception: The first paragraph in a chapter or section.

This is more of a convention than a rule (though CMOS now mentions it; see paragraph 2.15). In the first ten editions of CMOS, judging by the prefaces, first paragraphs were indented—as in the preface to the 1906 first edition:

Here’s the beginning of the preface to the eighteenth edition (in a screenshot from the PDF file used as the basis of the printed book). Note how the first paragraph (but not the second) begins flush left (the intervening epigraph also begins flush left, as most do):

Most books are designed this way now. It’s a nice distinction that shows how the absence of an indent can be almost as meaningful as an indent. Good exceptions should always work this way: in the context of rules designed to promote consistency and clarity, any departure should be made with the reader in mind.

CHRONICLE FOR HIGHER EDUCATION

Real Conversations. Smart Solutions. Big-Picture Thinking.

How should colleges respond to rising political pressure? What’s next for the bachelor’s degree? Where does AI fit into the curriculum?

Chronicle Festival 2025 brings answers—and bold new questions—directly to your screen. This free virtual event (Sept. 16–18) features thought leaders and campus executives on what’s working and what’s ahead.

You’ll hear from…

- Kim Hunter Reed, on how states are confronting access and completion
- Nancy Gonzalez, on transforming institutional culture at scale
- Chronicle journalists and experts guiding conversations across enrollment, leadership, workforce readiness, and more.

And take away insights on:

- How changing demographics and attitudes are reshaping campus culture
- Approaches to uphold honesty while integrating emergent technology

Day 3 | A Catalyst for the Work Force

- Real-world adaptation in institutional environments serving diverse futures


Plus, when you register, you will gain immediate access to a free Chronicle Festival companion collection containing excerpts from our most popular in-depth newsroom reports. Our Innovation Amid Uncertainty collection aims to provide valuable insights into the key themes of this year’s Festival.

1255 23rd Street, N.W. Washington, D.C. 20037

© 2025 The Chronicle of Higher Education


What is peer review?

In this clear and accessible explainer for The Conversation, clinical academic Joshua Winowiecki breaks down the peer review process — where anonymous experts evaluate research for quality, rigor, and clarity. While not without flaws, peer review remains a cornerstone of academic credibility. A useful primer for anyone navigating scientific literature, whether in healthcare, education, or beyond.

Reviewer 1: “This manuscript is a timely and important contribution to the field, with clear methodology and compelling results. I recommend publication with only minor revisions.”

Reviewer 2: “This manuscript is deeply flawed. The authors’ conclusions are not supported by data, and key literature is ignored. Major revisions are required before it can be considered.”

These lines could be pulled from almost any editorial decision letter in the world of academic publishing, sent from a journal to a researcher. One review praises the work, while another sees nothing but problems. For scholars, this kind of contradiction is common. Reviewer 2, in particular, has become something of a meme: an anonymous figure often blamed for delays, rejections or cryptic critiques that seem to miss the point.

But those disagreements are part of the peer-review process.

As a clinical nurse specialist, educator and scholar who reviews studies in nursing and health care and teaches others to do so critically as well, I’ve seen how peer review shapes not just what gets published, but what ultimately influences practice.

Peer review is the checkpoint where scientific claims are validated before they are shared with the world. Researchers and scholars submit their findings to academic journals, which invite other scholars with similar expertise – those are the peers – to assess the work. Reviewers look at the way the scholar designed the project, the methods they used and whether their conclusions stand up.

The point of peer review

This process isn’t new. Versions of peer review have been around for centuries. But the modern form – anonymous, structured and managed by journal editors – took hold after World War II. Today, it is central to how scientific publishing works, and nowhere more so than in health, nursing and medicine. Research that survives review is more likely to be trusted and acted upon by health care practitioners and their patients.

Millions of research papers move through this process annually, and the number grows every year. The sheer volume means that peer review isn’t just quality control; it has become a bottleneck, a filter of sorts, and a kind of collective judgment about what counts as credible.

In clinical fields, peer review also has a protective role. Before a study about a new medication, procedure or care model gains traction, it is typically evaluated by others in the field. The point isn’t to punish the authors – it’s to slow things down just enough to critically evaluate the work, catch mistakes, question assumptions and raise red flags. The reviewer’s work doesn’t always get credit, but it often changes what ends up in print.

So, even if you’ve never submitted a paper or read a scientific journal, peer-reviewed science still shows up in your life. It helps shape what treatments are available, what protocols and guidelines your nurse practitioner or physician uses, and what public health advice gets passed along on the news.

This doesn’t mean peer review always works. Plenty of papers get published despite serious limitations. And some of these flawed studies do real harm. But even scholars who complain about the system often still believe in it. In one international survey of medical researchers, a clear majority said they trusted peer-reviewed science, despite frustrations with how slow or inconsistent the process can be.

What actually happens when a paper is reviewed?

Before a manuscript lands in the hands of reviewers, it begins with the researchers themselves. Scientists investigate a question, gather and analyze their data and write up their findings, often with a particular journal in mind that publishes new work in their discipline. Once they submit their paper to the journal, the editorial process begins.

At this point, journal editors send it out to two or three reviewers who have relevant expertise. Reviewers read for clarity, accuracy, originality and usefulness. They offer comments about what’s missing, what needs to be explained more carefully, and whether the findings seem valid. Sometimes the feedback is collegial and helpful. Sometimes it’s not.

Here is where Reviewer 2 enters the lore of academic life. This is the critic who seems especially hard to please, who misreads the argument, or demands rewrites that would reshape the entire project. But even these kinds of reviews serve a purpose. They show how work might be received more broadly. And many times they flag weaknesses the author hadn’t seen.

Review is slow. Most reviewers aren’t paid, with nearly 75% reporting they receive no compensation or formal recognition for their efforts. They do this work on top of their regular clinical, teaching or research responsibilities. And not every editor has the time or capacity to sort through conflicting feedback or to moderate tone. The result is a process that can feel uneven, opaque, and, at times, unfair.

It doesn’t always catch what it is supposed to. Peer review is better at catching sloppy thinking than it is at detecting fraud. If data is fabricated or manipulated, a reviewer may not have the tools, or the time, to figure that out. In recent years, a growing number of published papers have been retracted after concerns about plagiarism or faked results. That trend has shaken confidence in the system and raised questions about what more journals should be doing before publication.

Imperfect but indispensable

Even though the current peer-review system has its shortcomings, most researchers would argue that science is better off than it would be without the level of scrutiny peer review provides. The challenge now is how to make peer review better.

Some journals are experimenting with publishing reviewer comments alongside articles. Others are trying systems where feedback continues after publication. There are also proposals to use artificial intelligence to help flag inconsistencies or potential errors before human reviewers even begin.

These efforts are promising but still in the early stages of development and adoption. For most fields, peer review remains a basic requirement for legitimacy, while some, such as law and high-energy physics, have alternative methods of communicating their findings. Peer review assures a reader that a journal article’s claim has been tested, scrutinized and revised.

Peer review doesn’t guarantee truth. But it does invite challenge, foster transparency, offer reflection and force revision. That’s often where the real work of science begins.

Even if Reviewer 2 still has notes.

![Photograph of a Group of Immigrants Outside a Building on Ellis Island [cropped]](https://substackcdn.com/image/fetch/$s_!PIvB!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F276cbfd5-6ab6-4eaf-b3f5-7de5db9ef486_891x313.jpeg)