Cultural Expression as Legal Speech

Earlier this year, I had the honor of delivering the Keynote Address at the Twenty-Seventh Annual Conference of the Association for the Study of Law, Culture, and the Humanities, held at Georgetown Law School on June 17–18, 2025.

It was a gathering of scholars, writers, artists, and practitioners who care deeply about the ways law shapes our cultural lives—and the ways culture, in turn, shapes the law.

This keynote grows out of that conversation.

It’s a talk about speech—spoken and unspoken, celebrated and suppressed.

It’s about the forms of expression that shape our political moment and the quieter, everyday forms of expression the law often fails to see. It’s about what we protect, what we silence, and what we misname. In many ways, it is about this newsletter—the need to ground conversations about law and law reform in the lived experiences of ordinary people.

And it begins with a childhood memory.

I share it with you here not simply as a transcript of a public talk, but as an invitation to think alongside me about how culture makes law, how law remakes culture, and what it means to recognize the full spectrum of human expression as worthy of protection.

Cultural Expression as Legal Speech

Today, speech is both everywhere and under siege.

From misinformation and hate speech to book bans and criminal prosecutions, some call this a golden age for expression, and others call it an iron one. Legal systems across the globe are scrambling to define acceptable speech, regulate contested information, and manage the expressive acts that shape our political, cultural, and moral lives.

But as we debate these high-profile flashpoints, I want to begin somewhere quieter. Somewhere personal. I want to begin by telling you about Nolan Brown, and a different kind of speech that matters.

His story reminds us that not all speech looks like speech. Some of the most powerful expressions in American life—Black vernacular, fashion, music, swagger, silence—are too often dismissed by law as noise, defiance, or disorder. But these cultural expressions are not just performances of identity or style. They are acts of meaning-making. They are speech. And our failure to treat them as such reveals the deep limits of how the law sees—and fails to see—Black life.

I didn’t have that language back then.

But I felt it. Even as a young boy, I felt it in the way Nolan moved through the world, and in the way we all watched him move. If Nolan and I were to get into a fight, it would be him—hard shoulders inching toward six feet tall above basketball-sized hands—against me, a pudgy five-foot-five with Harry Potter frames and a sagging JanSport bookbag full of textbooks.

He’d throw hands like a youthful Mike Tyson, and I’d duck and weave on the cracked asphalt like an aging Muhammad Ali, dancing clumsily while admiring his gleaming Nike Air Jordans and oversized Rocawear jeans. Nolan would smack my bicep until its caramel hue matched his dark-brown sheen, and I’d eventually give in, as I so often did, because hanging out with Nolan Brown was kind of a big deal.

Nolan was the kind of kid every Black boy in the South Bronx wanted to become.

Including me.

Beginning with Nolan clarifies how the figure of a person racialized as Black—someone embodying a trendsetting popular culture celebrated by mass media—reveals the paradox of law in a society shaped by liberal capitalism, constitutional republicanism, and a long history of legally sanctioned racial injustice. Black bodies are essentialized on the covers of hip-hop magazines like The Source or XXL—a swaggering aesthetic adorned with modern luxuries—even as Black communities battle socioeconomic landscapes marked by physical decay and human loss.

For Nolan and other Black boys navigating racial bias, stereotypes like chronic misbehavior or disorderly conduct are not just societal narratives—they’re inscribed and reinforced by law. The legal system, shaped by cultural perceptions of Blackness, defines and applies rules in ways that sustain these stereotypes, often cloaking Black boys in the expectation of criminality.

Some scholars like Naomi Mezey have argued that law can reasonably be considered synonymous with American culture. It certainly felt that way to thirteen-year-old me. The beliefs, practices, and norms that governed life in my South Bronx neighborhood carried the weight of law, shaping a world where Black boys were always running from trouble—or at least that’s how it seemed to me.

When Nolan and I broke the neighbor’s car window while playing catch, I ran home convinced I was the innocent one. It was Nolan’s wild arm, his bright idea to turn a dirt road into our field of dreams. And, in my eyes, the evidence was conclusive. Nolan took the blame, confessed to his dad, and was punished with sharp words that faded into quiet sobs. And, by the next day, we were back to playing, Nolan quietly washing away my guilt with a soft smile.

“You mad?” I muttered through burning cheeks.

“Nah, you good,” he said, a sparkle in his brown eyes.

That was the usual ending to our adventures—a brush with legal culture that reinforced stereotypes about the Black racial subject, followed by laughter that restored our individual and collective dignity.

I believe this moment captures something essential about how speech matters in ways that transcend current debates about regulating expression. Nolan’s speech—his cultural vernacular, his embodied presence, his very existence as expressive activity—was already being regulated, suppressed, and instrumentalized by law long before he ever opened his mouth.

As we grapple with new legal tools to combat threats, harassment, and hate speech, we must ask: what forms of expression are we failing to recognize as speech at all?

Robin West once wrote that while we can “read literature for its substantive contribution to our understanding of law,” no analogous approach exists for cultural-legal scholars. In other words, we haven’t read culture deeply enough to enrich our understanding of what law is.

Today, I want to suggest something simple yet urgent: exploring the depths of Black culture and the breadth of Black lived experience—its joy and pain, its poetry and its protest—is essential to understanding what law means in America.

From a literary perspective, reading Black culture alongside legal texts—the approach of law-and-culture—shows how legal meaning is built, lived, resisted, and transformed in daily life. From a jurisprudential perspective, reading the legal system itself as a cultural text—the approach of law-as-culture—helps us see how anti-Blackness and White supremacy are not outside the law, but embedded within its very formation. Culture doesn’t just reflect the law; it makes it.

But can we better understand the nature of law itself through a study of culture?

In other words, is there law in culture, and conversely, is there culture in law?

This approach reframes our current debates about speech regulation. In this era of book bans and prosecutions for expressive activity, the question isn’t just whether law can be read like literature—but how cultural narratives determine what kinds of expression are even recognized as “speech” worth protecting.

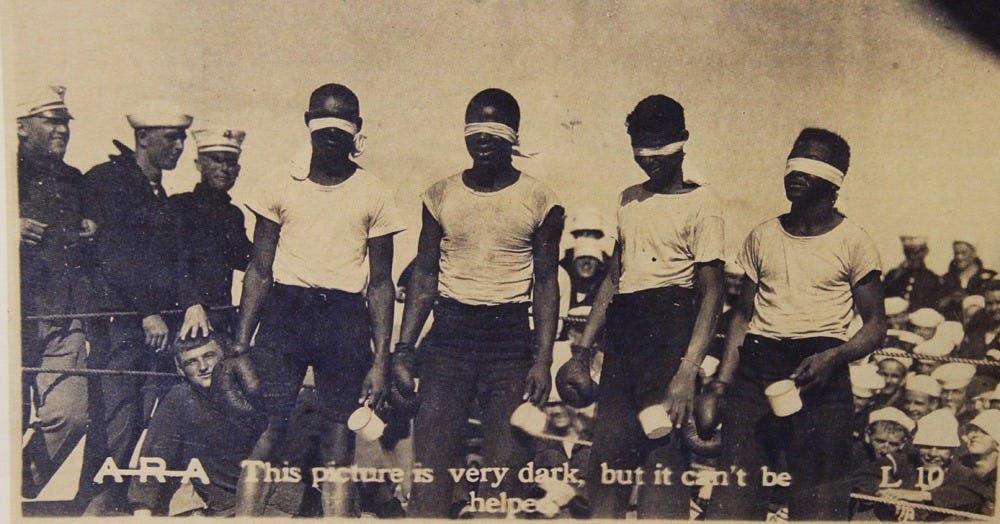

Consider Ralph Ellison’s Invisible Man and its infamous Battle Royal scene. The narrator is invited to deliver his graduation speech to White civic leaders in his community but must first endure a blindfolded boxing match for their entertainment. Ellison reveals American legal culture as driven by “an erotic lust for materialistic self-interest and fleeting self-gratification.” The blindfolded Black boys fight while White men place bets on them, exposing both the spectacle and exploitation that underpin Western legal culture.

Black counternarratives—through the Civil Rights and Black Power movements; the prophetic witness of jazz; the blues sentiment of hip-hop; the communal ethos of DJs, breakdancers, and graffiti artists—have too often been left out of such discourse. Hip-hop, in particular, embodies the endurance of Black radicalism and its engagement with American legal meaning. Yet its message is frequently silenced in political spaces and commodified in the capitalist marketplace.

Perhaps law’s treatment of Black culture reveals less about so-called Black deviance and more about the cultural production of legal meaning. Perhaps the eroticized fantasies of Black life relegated to the social periphery—or to the hidden ballrooms of White supremacy, as Ellison portrayed in his novel, Invisible Man—are, symbolically, central to American law itself.

Reading Black literature in this way reveals what traditional legal scholarship often misses: that law often operates not through neutrality but through racialized spectacle, targeted commodification, and strategic violence.

The weaponization of culture in politics—what Antonio Gramsci called the struggle for cultural hegemony—underscores culture’s central role in liberal democratic debates about rights, privileges, and citizenship. As Raymond Williams once wrote, our political “culture wars” are ultimately battles over “a particular way of life.” Today’s speech regulations must be viewed within this broader context of cultural suppression.

We see this cultural policing historically too.

Take Ronald Reagan’s use of the “welfare queen” trope during his 1976 presidential campaign. It wasn’t framed as a critique of law’s failure to meet the material needs of low-income women. Instead, Reagan used cultural rhetoric to pathologize marginalized people, leading voters to see Black women on welfare as lazy and irresponsible. This narrative fueled racially biased legal reforms that benefited corporations and the wealthy.

Let me offer a concrete example of how cultural policing operates in modern life. When twenty-three-year-old Amadou Diallo, a West African immigrant and street vendor mistaken for a serial rapist, was killed outside his Bronx apartment by four plainclothes NYPD officers who fired forty-one shots at him, my childhood friend Nolan wouldn’t mention it.

Instead, Nolan and I hurried home past the heavyset preacher with permed hair shouting into a megaphone at the protest near the bus stop, only three blocks from the alley where we had broken my neighbor’s car window. Later, Nolan would ask if I wanted to play basketball in the back driveway, or Metal Gear Solid on my PlayStation One.

Even though NYPD Commissioner William Bratton had pledged to “reclaim the streets,” the law in our neighborhood meant surveillance for working-class immigrants and Black folks hustling to make ends meet. It felt safer to lose ourselves in video games and hoop dreams. Our innocence flourished behind chain-link fences, where polite smiles met patrolling officers who had already judged us guilty by association. Our concrete playground was a holding cell in disguise.

The legal fiction of reasonable suspicion was reaffirmed by Diallo’s death, justified by the perceived threat of his presence. In response, we developed a Black legal culture of fearing and avoiding the police.

And that culture, in turn, became our law.

In class, Nolan and I would rise each morning, our chairs scraping the wooden floor in unison, our right hands pressed firmly over our chests as we pledged allegiance to the flag—a carefully orchestrated lesson in American patriotism. The flag stood for a nation committed to republicanism, liberty, and justice for all.

Yet this daily ritual also served as a balm, soothing the dissonance we felt living in the Bronx. Despite the poverty, crime, and policing surrounding us, the pledge implied that America wasn’t the problem. Instead, it suggested that the source of our inequities lay elsewhere—not in our law, but in our culture.

When we silence cultural voices that expose these contradictions, we lose the ability to see how law truly operates. At thirteen, it often felt like Nolan and I were playing a different game entirely. As neighborhoods around us gentrified—pushing our Black and Latinx families out and bringing wealthier professionals in—we were told the law guaranteed fair housing. As police swarmed our streets, stopping and frisking boys who looked like us while wealthier neighborhoods enjoyed peace, we were told the law protected us from “unreasonable searches and seizures.”

Like Ralph Ellison’s narrator in Invisible Man, I often felt like I was dancing around trouble in the Bronx like an anxious boxer in a crowded ring, eager for my moment to speak, to prove I belonged in the rooms of power. But that dance is not incidental; it is strategic. It preserves law’s illusion of neutrality by muting the voices that expose its cultural and political dimensions.

Today’s attacks on Black and LGBTQ+ authors through book bans, the dismantling of DEI programs, the surveillance of protest culture, and academic restrictions on cultural critique are not isolated events. They are systematic efforts to suppress expressions that challenge dominant legal and political narratives.

What makes this suppression so insidious is that it operates by denying that these forms of expression are speech at all. Hip-hop culture, street vernacular, embodied protest, communal storytelling—these are cast as “conduct” rather than expression, as “disorder” rather than discourse, as incivility or riots rather than reasoned dissent or democratic engagement. The regulatory frameworks that emerge treat cultural expression as something to manage and contain, not protect.

As we develop new legal tools for our contemporary speech challenges, we must confront how law conceptualizes, regulates, commodifies, and instrumentalizes expression in its broadest sense. As Austin Sarat and Thomas Kearns argued, law is unique among cultural institutions because it has “meaning-making” power. The very language of law becomes a communal text, shaping not only what people believe is true, but what they believe is right.

When law frames Black culture and the cultures of other marginalized communities as antithetical to American cultural traditions, rather than integral to American pluralism, it draws fictitious boundaries around what counts as civil, legitimate, or deserving of respect. These boundaries determine who is seen as an insider, who is kept outside, and what counts as a “civil” mode of human engagement, all while masking the fundamental role that culture plays in shaping law’s meaning and legitimacy.

Beyond the legal academy, cultural-legal studies links directly to broader social movements that recognize the intertwined nature of law, culture, and power. Movements for racial justice, labor rights, environmental justice, and reproductive rights have long understood that legal change is not simply about passing new laws and policies but about shifting cultural narratives that justify systems of oppression.

Take the Movement for Black Lives. Its work is not limited to advocating policy reforms. It’s also about reimagining the very purpose of policing. By reframing policing as a tool of racial and economic control rather than public safety, it challenges the cultural assumptions that sustain criminalization and legal violence.

In this political moment—flooded by disinformation, besieged by far-right speech, and defined by the suppression of marginalized voices—we need tools that account for the full spectrum of human expression. The law-and-culture conversation can no longer be confined to elite institutions. It must extend to communities whose expressive lives are denied legal recognition as “speech” worthy of constitutional protection.

The narrator in Ellison’s Battle Royal is forced to confront the bitter truth that his scholarship—once viewed as a symbol of success within the confines of Jim Crow America—might actually be a tool of subjection. In a dream, while attending a circus with his grandfather, he finds himself opening an endless series of envelopes until he discovers a single note that reads:

“To Whom It May Concern… Keep This Nigger-Boy Running.”

I, too, have come to realize that there is a part of my own story—and my scholarship—that feels inherently devious. I carry a quiet awareness that Black culture often feels like a hopeful attempt to conjure laughter in the midst of America’s ongoing circus. A joke told to keep from crying. A dance performed until the pain of Black letter law is momentarily eased.

If there is one idea I hope you carry with you, it is this: law is never neutral.

It is shaped by culture—and in turn, it shapes how we understand power, justice, and democracy. Law doesn’t live only in statutes or courtrooms; it’s embodied, narrated, contested. It is a language of power, and it can be used to either oppress or liberate.

Legal discourse too often presents law as detached from the social forces that give it life. But culture—through stories about crime, race, poverty, and belonging—guides not only public perception, but legal doctrine itself.

Legal transformation is always cultural transformation.

That means the fight for free speech is not simply about preserving rights—it is about expanding recognition: of whose voices matter, whose stories are heard, and whose truths are protected.

If we are serious about justice, we must critique not only the legal texts that constrain us but also the cultural narratives that justify our subjugation. We must ask not just what the law says, but what it silences.

And that requires courage.

Courage to listen. Courage to imagine otherwise.

Courage to speak when the law forgets how to.

Thank you for reading and thinking alongside me.

If this keynote resonates, I hope it sparks conversations in your own circles about how law is lived, felt, and shaped from the ground up.

As always, I’m grateful to share this space with you.

In solidarity,

Blamed for the nation’s historic measles outbreak, West Texas Mennonites have hardened their views on vaccines

Lindsey Byman, The Texas Tribune, December 17, 2025

SEMINOLE — When Anita Froese’s middle daughter came down with fatigue, body aches and the tell-tale sign of measles — strawberry-colored spots splattered across her skin — she waited it out. Two days later, her son developed the same symptoms. After a week, the disease finally reached her youngest daughter, who vomited all night as her fever spiked to 104.

Froese never brought her children to a doctor. Instead, she administered cod liver oil, vitamins, tea and broth. She refreshed their cold compresses and ran them epsom salt baths. She brought them to a holistic health center for an IV treatment used for heavy metal poisoning.

None of her kids are fully vaccinated against measles. She stopped immunizing her first two as infants after hearing stories about others who had bad reactions to the shots, and she approved no shots for her third. Even as an outbreak ripped through her community, Froese preferred that her children contract measles to build natural immunity because to her, measles was on par with the flu.

“It seemed like this was a disease that had come up now and was this big deal,” said Froese, who was vaccinated as a child. “To me, that wasn’t the case.”

But outside of the West Texas town of 7,000, health experts watched in horror as the once-dormant disease spread like a drop of blood in water across Seminole and the outlying counties, hitting at least three other states and breaching international borders. It killed two children, both Mennonites like Froese. It sickened at least 762 Texans — more than half of whom live in Seminole’s Gaines County — and hospitalized 99 statewide. The West Texas outbreak was the nation’s largest in more than 35 years.

But for the Mennonites at the center of it, the scrutiny was worse than the disease itself. Today, Froese and others say they’re no more likely to get vaccinated, and they’re even less trusting of the government and health officials who they feel targeted them and blamed them for causing the outbreak.

Mennonites questioned why measles forced their religious community into the national spotlight. They didn’t know why TV crews clamored to film them grieving little girls who they believed died from underlying conditions or negligent hospitals rather than measles. They didn’t understand the messages from outsiders demanding they leave the country for exercising their right to not vaccinate.

“You’re looked at as this ignorant people that’s almost fueling this thing, like we’re having measles parties, and that was never the case,” said Pastor Jake Fehr of Mennonite Evangelical Church.

Vaccine hesitancy has been brewing for the last 20 years among Mennonites, a cloistered Christian sect with a historical distrust in government. Pandemic-era mandates brought that to a boil in Seminole’s Mennonite community and across Texas.

The religious group is a microcosm of the distrust in vaccines gripping the state. Twice as many Texas parents exempted their kindergartners from measles vaccines this year compared to five years ago, with Gaines County among the highest at almost 20% of its kindergartners being exempt, compared to the state average of less than 4%. Seminole’s vaccination rate is likely far lower when it includes the Mennonites who are homeschooled.

Among the world’s most infectious diseases, measles causes rash and flu-like symptoms and was for many years rare in the U.S. because of widespread vaccination. Especially in young children, measles can cause complications like blindness, brain swelling and even death. Two doses of the measles vaccine are 97% effective at preventing the disease, according to the U.S. Centers for Disease Control and Prevention.

Texas health officials declared the outbreak over in August — ending an event Froese thinks was inflated from the start.

“I know of plenty of people that had measles when they were children, and they all survived,” Froese said. “To me, that was a risk I was willing to take.”

As measles tore through his community last winter, John Peters, 54, feared the disease was causing his pallor, ringing ears, body pain and fatigue.

In April, after his Mennonite mettle crumbled against his wife’s demand that he seek help, he finally saw a doctor.

He didn’t have measles. He had leukemia.

Peters got seven blood transfusions in a week, and six more over the next three months. When he returned from a hospital stay in the spring, he regretted high-fiving a blotchy child at the grocery store. He changed his immigration consulting firm to appointment-only and asked clients to wash their hands and stay home if they had been around sick people.

“I had zero immunity,” he said. “I could not afford to get measles.”

Peters, who trusts mainstream medicine, considers himself a modern Mennonite. He wears a goatee and a Texas Tech University ring, which traditional Mennonites consider vain. He owns 17 guns even though Mennonites are pacifists. Despite his neighbors avoiding the public eye, Peters is a town celebrity because he hosts a weekly radio show and pens monthly columns in the local newspaper.

His mother grew up in a Mennonite colony in Mexico and combined natural and Western medicine. She administered Tylenol and Vicks VapoRub, smeared pig lard on her children’s chests to relieve congestion and believed Dr. Pepper was a cure-all.

Mennonites are predisposed to questioning vaccine mandates. Their history of persecution from political and religious authorities has created a culture of distrust in the government. The Mennonite movement emerged from the Anabaptist movement in 16th-century Northern Europe, moving through Russia, Canada, Mexico and the U.S. in sequestered communities. Peters estimates that a third are undocumented. Many Mennonite women in Seminole still know only Low German, which is spoken in Northern Germany and parts of the Netherlands.

Despite valuing traditional remedies, Peters’ mom was vaccinated as a child and she would later immunize her children, including Peters. She fell in line with much of her generation of Mennonites.

“You can’t argue the fact that vaccinated people fight measles better,” Peters said, adding that he vaccinated his two daughters after doing research and talking to doctors.

Peters’ take on health care is a product of both his past and present.

Against his doctors’ advice, Peters drank a fruit juice that Mennonites insisted would cure his cancer and which he said tastes like rotten cherries. He drew the line at offers from friends and another leukemia patient to take the anti-parasitic drug ivermectin, opting to give his $15,000 monthly prescription a chance.

He appreciates unorthodox approaches to medicine — like Health and Human Services Secretary Robert F. Kennedy Jr. promoting vitamin A to treat measles — and he speculates that natural remedies could be as effective as vaccines.

But he wishes more of his community were vaccinated because he knows vaccines eradicated polio. Before the measles shot became available in 1963, the disease killed 400 to 500 American children each year. Peters believes modern medicine is why he’s here today.

“The hospital system saved my life,” he said.

Even as the measles outbreak endangered him in his vulnerable condition, it also solidified his views against forced immunizations. He wondered whether his two rounds of COVID shots could have caused his leukemia.

This year, pushes for Mennonites to vaccinate and quarantine gave Peters flashbacks to the COVID-19 pandemic.

Already opposed to government rules, Mennonites bristled at pressure from authorities to stick out their arms during the 2020 outbreak.

“The pro-vax crowd, I think in my opinion, has kind of messed up,” Peters said. “If you’re living in the land of the free and you pretty much have to get vaccinated, to the third generation Mennonites — the kids that grew up here — that just doesn’t sound right.”

Aside from the splotched children in restaurants and Walmart, Seminole felt unremarkable to Froese as measles cases ticked up and her town became a nightly feature on news programs.

Froese saw her sister visiting from Kansas, swapped health supplies with another sister and cared for her nephew who came down with an unknown illness. She skipped only one Sunday mass when her teens were sick.

She went about life normally because she believed measles wasn’t a threat to her family.

“As sick as they were, they’ve been just as sick with other things that they’ve had in the past, just then they didn’t have the rash,” she said. “And they got it, they got over it, and we went on with life.”

She disavowed vaccines after hearing about children of people she knew who were never the same after they received the shot: a young boy gone blind, and a girl who seized and foamed at the mouth, becoming a quadriplegic, she said. Local Mennonite shop owners, church-goers and pastors cite similar stories, saying the risk isn’t worth the immunity.

Studies have proven time and again that vaccines have a low risk of severe complications, though mild effects are common as the body builds protection.

It’s impossible to know whether vaccines caused these maladies without the patients’ full medical history, said Wesley Friesen, a Mennonite operating room nurse at the Seminole Hospital District.

“You want to trust that what they’re telling you is true. But sometimes you wonder, what’s the whole story?” Friesen said, expressing skepticism about whether serious vaccine complications resulted from the medicine. “There are individuals that did experience negative side effects, probably, you know, for decades. But you have to look at the whole picture. I mean, are they basing their decision on a relatively small percentage?”

As measles spread, local health food stores began distributing unconventional treatments including free cod liver oil and budesonide inhalers, which are typically used for asthma, while vitamin A flew off the shelves. Mennonites reached for wonder oil — herb-infused rubbing alcohol to reduce fevers — and supplements that claim to enhance various bodily functions.

Though some Seminole residents got vaccinated amid the outbreak, drive-by vaccine tents largely sat dormant.

Like Peters, Froese also believes COVID turned more Mennonites off vaccines.

She thought authorities overreacted to scare people into getting immunized. The restrictions felt overbearing and punitive: A local hospital limited visits, leaving Froese’s children to gaze at their cancer-ridden grandmother through the window for what they thought would be the last time. She was alarmed when a hospital refused to administer ivermectin to her father-in-law, though global health authorities recommend against treating COVID-19 with ivermectin.

“I know when you’re dealing with something that you don’t understand, you know, for the doctors, even they have to do something that they then think works,” Froese said. “But again, I think COVID was blown out of proportion.”

And so was the measles outbreak, she said.

After recovering, her daughters shed hair for two months and one developed an acne-like condition that vitamins couldn’t treat but antibiotics could. Measles can cause “immune amnesia,” where the body forgets how to fight infections for months to years. But Froese questions whether the aftereffects of measles are as bad as doctors and public health authorities have made them out to be, and whether the skin condition was related to measles at all.

She’s proud of how her community responded to the outbreak and now believes more strongly that they can fend off diseases without official help.

“We were getting so much media attention and blame for all this kind of stuff,” Froese said. “And I think we all just decided that we would rally together and get through it.”

On a recent Sunday, more than 170 cars filled the gravel parking lot of the Mennonite Evangelical Church in Seminole. In a pew facing walls decorated with two plain wreaths, a man in scuffed cowboy boots wrapped his arm around his wife, who wore an ankle-length velvet dress, emerald green.

Their five children had recovered from measles, and in July, one daughter won gold in the national tumbling competition. The disease was behind them.

In early December, almost a year from the start of the outbreak, 17 new cases appeared in the U.S. If these strains are genetically linked to those in West Texas, the country might follow Canada in losing its measles elimination status, which it has held for 25 years.

At least in Seminole, people are safe from another measles event because they’ve either been vaccinated or fought the disease, said Dr. Wendell Parkey, chief of staff for Seminole Memorial Hospital.

But he’s now staring down the barrel of a different vaccine-preventable outbreak: whooping cough. He thinks all the medical community can do now is adapt their practices to prepare for more sick people each year.

“I don’t want a society like this. I’d rather be in a society that vaccinates,” Parkey said. “But you don’t get a choice on playing that game.”

Health officials spread the word about the importance of measles vaccines in person and through the radio, newspapers and churches. Local doctors and Mennonite experts fear the town’s anti-vaccine camp will respond the same to the next outbreak unless authorities learn to speak their figurative — and sometimes literal — language to answer their questions and build confidence.

While Seminole residents circulated messages to avoid reporters during the outbreak, John Dueck, editor of the Canada-based newspaper Die Mennonitische Post, became a de facto Mennonite expert for government officials and news media.

Dueck published an editorial explaining the facts about measles and vaccines in terms that appealed to Mennonite values about protecting the community. He said Mennonites and the Canadian government gave him positive feedback.

“If you come into a community just in time when everything is burning, you will find people nervous,” he said.

But they might be more receptive to messages from authorities about vaccines and health scares if they already formed a relationship during calm times, he added.

Seminole doctors worry that will be tough after the measles outbreak whittled away what scant trust remained in the vaccine-hesitant community.

While some Mennonite families got vaccinated during the outbreak, Friesen said health messaging fell short because it came across as orders. He said a better approach is to teach people how vaccines work and invite questions.

“I guess we haven’t figured that out yet,” Friesen said. “Nothing has changed, and I don’t think it’s going to change for a long time.”

This article first appeared on The Texas Tribune.

The Evil Genius of Fascist Design: How Mussolini and Hitler Used Art & Architecture to Project Power

A look at architecture as propaganda.

in Architecture, History, Politics | December 17th, 2025

When the Nazis came to power in 1933, they declared the beginning of a “Thousand-Year Reich” that ultimately came up about 988 years short. Fascism in Italy managed to hold on to power for a couple of decades, which was presumably still much less time than Benito Mussolini imagined he’d get on the throne. History shows us that regimes of this kind suffered a fairly severe stability problem, which is perhaps why they needed to put forth such a solid, formidable image. The IMPERIAL video above explores “the evil genius of fascist design,” focusing on how Hitler and Mussolini rendered their ideologies in art and the built environment, but many of its observations can be generalized to any political movement that seeks total control of a society, especially if that society has a sufficiently glorious-seeming past.

Fascism’s visual language has many inspirations, two of the most important cited in the video being Romanticism and Futurism. The former offered “a longing for the past, an obsession with nature, and a focus on the sublime”; the latter “worshiped speed, machines, and violence.” Despite their apparent contradiction, these dual currents allowed fascism “a peculiar ability to look both backward and forward, to summon the glory of past empires while promising a radical new future.”

In Italy, such an empire may have been distant in time, but it was nevertheless close at hand. “We dream of a Roman Italy that is wise and strong, disciplined and Imperial.” Even Hitler drew from the glories of ancient Rome and Greece to shape his own aspirational vision of an all-powerful German civilization.

Hence both of those dictators undertaking large-scale Neoclassical-style architectural projects “to bring the aesthetics of ancient Rome to their city streets,” including even muscular statues meant to embody the officially sanctioned human ideal. Of course, the builders of the United States of America had also looked to Roman forms, but they did so at a smaller, more humane scale. Fascist structures were designed not just to be eternal symbols but overwhelming presences, intended “not to elevate the soul, but to crush the individual into the crowd and promote conformity.” This, in theory, would make the citizen feel small and powerless, but with an accompanying quasi-religious longing to be part of a larger project: that of fascism, which subordinates everything to the state. For the likes of Mussolini and Hitler (an artist-turned-politician, as one can hardly fail to note), aesthetics was power — albeit not quite enough, in the event, to ensure their own survival.

Reflections on morality

Language as a Social Cue From Effectiviology

This week’s email is about how the language that people use shapes our perception of them (and vice versa).

Here are the key practical points you should know (mainly from this research article):

- People’s language has fundamental social meaning in the eyes of others.

- We use various aspects of other people’s language to categorize them, like when we perceive someone as low or high status based on what vocabulary they use, what accent they have, or even what language they speak.

- We often essentialize language groups, meaning that we view speakers of different languages as being fundamentally different when it comes to factors other than their language.

- When using language as a social cue, we often display in-group favoritism, by preferring those who speak like us, and attention to status, by preferring those who speak in a way that’s associated with a higher status.

This can be useful for understanding both how others perceive us and how we perceive others.

As always, I’m happy to hear your thoughts.

Have a great week,

Itamar